by Milan Jankovski, Rene Troost, Edward Coster, Stedin, and Marjan Popov, Delft University of Technology, The Netherlands

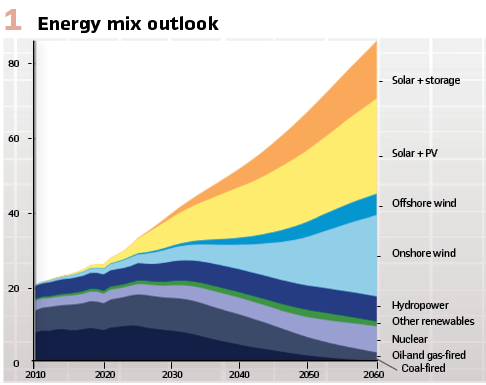

The Rise and Characteristics of Inverter-Based Resources

If you have attended any power-system-related conference lately, you have heard the words “energy transition”, “uncertainty”, and “inverter-based resources” repeated over and over again. Across the globe, power systems are undergoing a fundamental transformation. Decarbonization policies are driving renewables onto the grid at an unprecedented pace, while ageing synchronous generators (SGs) are steadily phased out. This trend is illustrated in Figure 1, which shows the projected electricity production from major energy sources according to the DNV Energy Transition Outlook.

It is evident that the majority of electricity will come from renewable energy sources, while non-renewable sources are expected to decline. In the Netherlands, this trend is exemplified by TenneT’s 2GW program, which plans to install an additional 14 GW of offshore wind capacity by 2035. Overall, we can expect renewable sources to become the largest producers of energy worldwide by 2050, and this transition may occur even faster in some regions.

Most renewables connect to the grid via power-electronic interfaces, resulting in a growing share of inverter-based resources (IBRs). We are now witnessing the very foundation of grid behavior shift: no longer do large spinning machines dominate; rather, electronic converters dictate the tone.

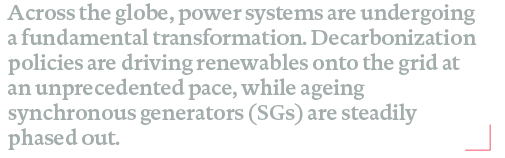

These developments introduce changes and challenges across all aspects of power system operation; however, here we focus on those that directly impact protection. First, as conventional power plants are decommissioned, the system’s inertia drops, making the grid more sensitive to rapid frequency and voltage fluctuations. Second, the nature of fault response is being fundamentally rewritten. SGs deliver high, predictable short-circuit currents based on their electromagnetic properties, but IBRs are constrained by their inverters’ limits and control settings – meaning the fault currents they supply are both lower and less predictable in the traditional sense. This is clearly seen in Figure 2, which shows that the fault current supplied by a Type IV (full converter) wind turbine has a maximum value that is only slightly higher than its nominal value.

Finally, when fault current availability drops at the transmission level, the impact ripples all the way down to the distribution level. The result? The ‘fault signature’ that protection relays look for is changed, posing new challenges for traditional schemes that rely on current magnitude to distinguish faults from normal operation.

With all these changes come uncertainties. Synchronous generators, for decades, represented a largely uniform technology – obeying only the fundamental laws of physics, which in their nature are independent of any government policy.

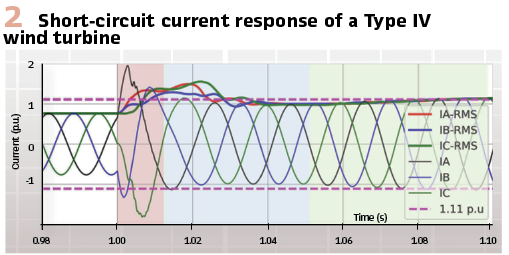

In contrast, IBRs are far from uniform. The distinction between grid-following and grid-forming controls is significant, and their responses to faults are heavily influenced by government policies and evolving grid codes – such as fault-ride-through (FRT) and low-voltage ride-through (LVRT) requirements. There are two main aspects in which these regulations differ: the minimum duration for which the IBRs must remain connected to the grid during a voltage disturbance, and the requirements for how they should support the grid during that time. As illustrated in Figure 3, these regulations can vary significantly between regions.

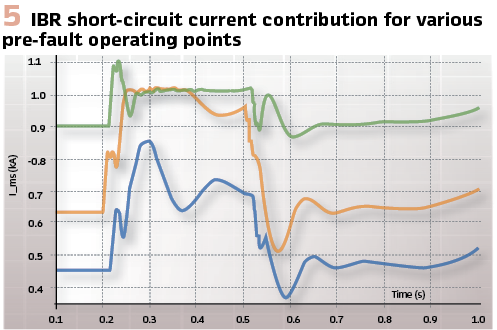

Beyond that, local network conditions and pre-fault operating points significantly affect the “amount” of current injected during a fault. Figure 5 visually illustrates this effect: it shows various fault responses from the same IBR, with the only parameter changing across scenarios being the pre-fault operating point. As shown, when an IBR operates at just 50% of its nominal power, its short-circuit current response can actually be lower than its nominal current, which can present a very serious problem for the sensitivity and security of an overcurrent relay.
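The dependence on the pre-fault operating point can be illustrated with a minimal sketch of a grid-following IBR under reactive-current priority. Everything in it is an assumption made for illustration, not the model used in this study: the function name `ibr_fault_current`, the droop gain `k = 2`, the 10% voltage deadband, the 1.1 pu current limit, and the choice to hold active current at its pre-fault value. Actual behavior depends on the vendor's controls and the applicable grid code.

```python
import math

def ibr_fault_current(v_fault_pu, p_prefault_pu, k=2.0, i_max_pu=1.1, deadband=0.1):
    """Simplified fault current (per unit) of a grid-following IBR.

    Hypothetical control assumptions:
    - reactive current i_q = k * voltage drop, once the drop exceeds the deadband
    - reactive current has priority and is capped at i_max_pu
    - active current i_d stays at its pre-fault value (pre-fault voltage = 1 pu),
      reduced only if the remaining current headroom is smaller
    """
    dv = max(0.0, 1.0 - v_fault_pu)
    i_q = 0.0 if dv <= deadband else min(k * dv, i_max_pu)
    headroom = math.sqrt(max(0.0, i_max_pu**2 - i_q**2))
    i_d = min(p_prefault_pu, headroom)
    return i_d, i_q, math.hypot(i_d, i_q)

# Same IBR, same shallow voltage dip (0.8 pu), two pre-fault operating points.
for p in (1.0, 0.5):
    i_d, i_q, mag = ibr_fault_current(v_fault_pu=0.8, p_prefault_pu=p)
    print(f"P_pre = {p:.1f} pu -> |I_fault| = {mag:.2f} pu")
```

Under these assumptions, the unit running at half power injects roughly 0.64 pu during the shallow dip, which is below its own rated current, while the unit at full power injects slightly above 1 pu. The magnitude never exceeds the 1.1 pu limit in either case.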

Short-Circuit Realities in an IBR-Dominated World

For decades, power system protection has relied on one key assumption: synchronous generators produce large, predictable fault currents. During a short circuit, their sub-transient response can reach many times their rated current, with a consistent shape and phase relationship governed directly by the laws of electromagnetism and the network’s properties. This generous “fault margin” and predictable behavior are mainly what made traditional protection and coordination schemes robust and reliable.

Unlike synchronous generators, IBRs fundamentally shift the fault current signature. Rather than following only the rigid laws of physics, IBR fault responses are altered and tightly controlled by electronics and their controls. Semiconductor thermal limits cap the maximum current, and software determines the magnitude, shape, and timing of current injection during a disturbance. To complicate matters, grid code requirements, such as FRT and LVRT, add policy-driven constraints that can vary by country.

In practice, most grid-following IBRs restrict short-circuit current to just 1.1-1.2 times their rated value, which, as was presented in the previous sub-section, is only their maximum. Grid-forming inverters might offer a higher initial spike, but this too is limited and quickly drops to a steady-state value similar to that of grid-following inverters. Ultimately, the thermal capabilities of semiconductors will once again play a crucial role in the limited short-circuit capability of grid-forming converters. Notably, the phase angle and waveform of the injected current are set by control objectives and may differ significantly from what traditional protection expects. This is further complicated by the inconsistency regarding whether IBRs should be explicitly required to inject negative-sequence current during unbalanced faults, and if so, what specific requirements should be applied.

This narrowing of the gap between normal load current and fault current presents new problems for protection schemes. For instance, pickup sensitivity for magnitude-based relays decreases – especially at the far end of long feeders or in weak grid areas where voltage dips are modest. Directional functions may become unreliable when the fault current is both low and its angle is set by inverter controls, rather than network impedances. Selectivity margins for time-graded overcurrent schemes narrow, increasing the risk of relays under-reaching or unintentionally overlapping.
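The loss of pickup sensitivity can be made concrete with a small check of the window in which an overcurrent pickup may be set. The sketch below is illustrative only: `pickup_window` is a hypothetical helper, and the 1.2 load margin and 1.5 sensitivity factor are assumed values, since each utility applies its own setting rules.

```python
def pickup_window(i_load_max, i_fault_min, load_margin=1.2, sensitivity=1.5):
    """Return (lower, upper, feasible) for an overcurrent pickup setting.

    lower: pickup must stay above the maximum load current with some margin
    upper: pickup must stay below the minimum fault current divided by a
           sensitivity factor so that remote faults are still detected
    All currents are in the same unit (for example per unit of rated current).
    """
    lower = load_margin * i_load_max
    upper = i_fault_min / sensitivity
    return lower, upper, upper > lower

# Illustrative numbers only, not results from the study.
print(pickup_window(i_load_max=1.0, i_fault_min=5.0))  # wide window, SG-like fault level
print(pickup_window(i_load_max=1.0, i_fault_min=1.5))  # no valid setting, IBR-like fault level
```

When the minimum fault current is several times the load current, a comfortable setting range exists. When the two approach each other, the upper bound falls below the lower bound and no magnitude-based setting satisfies both security and dependability.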

In summary, as IBR penetration increases, traditional protection approaches that rely on high, predictable fault currents are at risk of failing to deliver the security and dependability that system operators expect and require.

Uncharted Territory: Full-System Fault Level Impact of IBRs

Most published work examines the immediate, close-by effect of an IBR – typically the tie line that connects the IBR plant to the bulk power system. These studies show that classical distance protection can mis-operate or lose sensitivity when short-circuit currents are limited and their angles are controlled rather than determined by the machine. Most research in this area focuses on phase selection and fault-direction modules, which can mis-operate when exposed to signals that do not originate from a strong, synchronous-generator-dominated grid. While many studies suggest that integrating IBRs should not adversely affect line differential protection, it is widely acknowledged that applying line differential protection universally is neither practical nor feasible. While this evidence is valuable, it rarely quantifies how system-wide increases in IBR penetration reduce fault levels across the entire grid.

On the distribution side, research often focuses on local DER impacts – such as bidirectional flows, blinding of protection, and altered fault signatures – while essentially assuming the transmission backbone remains “strong” and unchanged. In reality, changes at the transmission level propagate downstream and manifest at the DSO level, where most protections still rely on clear current-magnitude separation between normal operation and faults.

A critical gap remains: the industry needs a holistic view that couples the evolving short-circuit landscape in transmission networks with its consequences for protection schemes in distribution grids. The transmission and distribution grids are interconnected, so we must analyze them as such. Only then can we fully reassess sensitivity, directionality, and coordination under lower and more variable fault currents.

As the Netherlands rapidly advances along the path of the energy transition, DSOs such as Stedin do recognize the urgency of addressing this challenge. To that end, an industrial Ph.D. project – a collaboration between Stedin and TU Delft – was launched to investigate protection concepts that will be far less dependent on future fault levels.

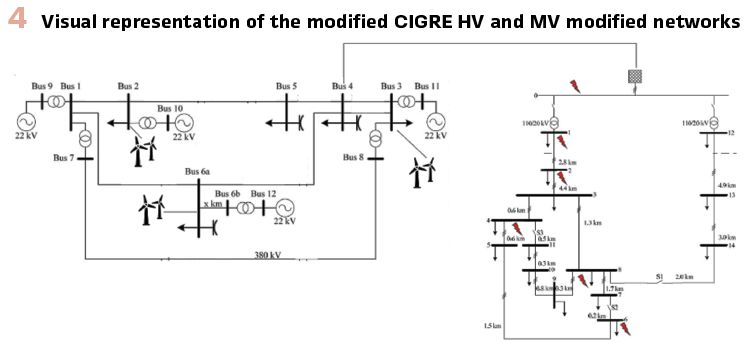

To bridge this gap and move from point-of-connection case studies to a genuinely system-wide perspective, we undertook a comprehensive analysis using the CIGRE benchmark networks at both transmission (HV) and distribution (MV) levels.

These well-established testbeds allowed us to systematically vary the generation mix – from synchronous generator dominance to high IBR penetration – and observe how short-circuit levels and protection margins evolve across the entire network. Importantly, our approach considered not only the feeder where an inverter park connects, but also remote buses – those electrically distant from the IBRs – where protection elements must still make reliable decisions as system conditions change.

In practical terms, we systematically replaced conventional plants with large wind parks operating in grid-following mode and studied a large set of short-circuit events, as shown in Figure 4. This setup enabled us to assess fault responses across a range of scenarios, providing a holistic view that is often missing in the literature.

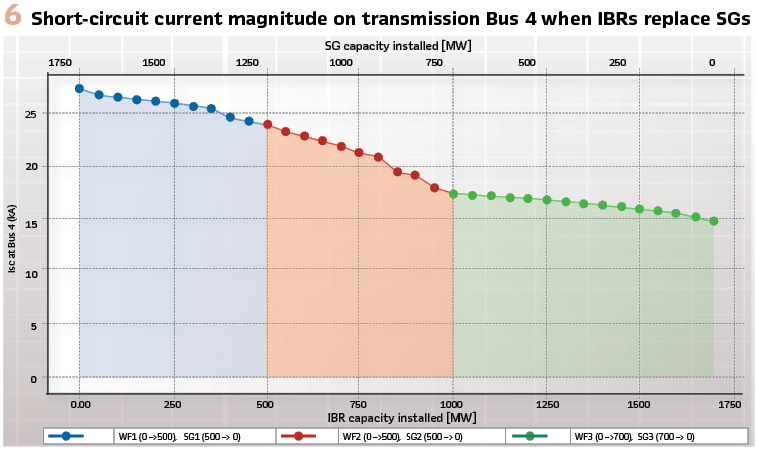

What did we discover at the transmission level? As IBR penetration increases, the aggregate short-circuit strength of the HV grid declines – sometimes gradually, but at other times in abrupt steps, depending on the relative proximity between the fault location and the IBR placement. Because IBR fault currents are strictly capped by power electronic and control constraints, the system Thevenin equivalent seen by faults becomes “weaker”: currents are lower and depend strongly on the voltage dip experienced at the IBR terminals. As presented in Figure 6, the short-circuit currents tend to decrease by up to 40% when the SGs are replaced by IBRs. It should be noted that the slack bus is still modelled under the assumption of an SG-dominated external grid, which may lead to a slightly higher current contribution than expected in the future. A similar pattern was observed across all buses in the transmission grid.
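A back-of-the-envelope sketch shows why replacing SG capacity with IBR capacity lowers the available fault current at a bus. It is a deliberately crude upper bound and not the CIGRE benchmark study: it ignores network impedance and phase angles, adds magnitudes arithmetically, and assumes a sub-transient reactance of 0.2 pu for the SGs and a 1.1 pu current limit for the IBRs. The function name `bus_fault_current` and all numbers are hypothetical.

```python
def bus_fault_current(sg_mva, ibr_mva, xd_sub=0.2, ibr_limit=1.1, base_mva=100.0):
    """Upper-bound fault current (pu on base_mva) at a bus fed directly by sources.

    Assumptions (illustrative only):
    - each SG behaves as 1 pu voltage behind its sub-transient reactance xd_sub
    - each IBR behaves as a current source capped at ibr_limit times its rating
    - contributions are added as magnitudes, which overestimates the true sum
    """
    i_sg = (sg_mva / base_mva) / xd_sub
    i_ibr = (ibr_mva / base_mva) * ibr_limit
    return i_sg + i_ibr

# Replace synchronous capacity step by step with the same MVA of IBRs.
total_mva = 100.0
for ibr_share in (0.0, 0.25, 0.5, 0.75, 1.0):
    i_f = bus_fault_current(total_mva * (1 - ibr_share), total_mva * ibr_share)
    print(f"IBR share {ibr_share:.0%}: fault current <= {i_f:.2f} pu")
```

Even this simple bound falls steadily as the IBR share grows, because each MVA of synchronous capacity removed takes several per unit of fault current with it, while the IBR that replaces it adds only about its rated current.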

Importantly, this is no longer just a local tie line effect. Buses located far from the IBR connection also experience reduced fault currents, tighter voltage depressions, and altered phase relationships compared to SG-dominated scenarios. These changes erode the “headroom” that classical protection elements rely on – distance protection at HV may lose assertiveness or require revised polarizing strategies, and backup overcurrent margins shrink. This finding highlights the need to re-examine protection settings and coordination as the system transitions to higher IBR shares.

However, what was more interesting from our perspective was how this trend plays out at the distribution level. The drop in HV short-circuit strength propagates downstream. As the transmission backbone weakens, MV feeders experience lower and more variable fault currents – even before considering local DERs and bi-directional power flows. This is a concern because most distribution protection schemes are fundamentally magnitude-based: pickup settings, grading intervals, and time-current coordination all depend on a robust gap between normal load and fault current.

As this gap narrows, sensitivity at the end of feeders deteriorates, directional decisions can become ambiguous, and coordination windows become dangerously tight – especially in weak or lightly loaded areas. This underscores the importance of considering both transmission and distribution level trends when assessing protection performance in evolving networks.
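The effect on time-current coordination can be sketched with the IEC standard inverse characteristic, t = TMS × 0.14 / ((I/Is)^0.02 − 1). The curve itself is the standard one; the pickup, time multiplier, and fault currents below are illustrative assumptions, and `trip_time_standard_inverse` is a name chosen for this example.

```python
import math

def trip_time_standard_inverse(i_fault, i_pickup, tms=0.1):
    """Operating time (s) of an IEC standard inverse overcurrent element.

    Returns math.inf when the current does not exceed the pickup,
    meaning the element never operates.
    """
    ratio = i_fault / i_pickup
    if ratio <= 1.0:
        return math.inf
    return tms * 0.14 / (ratio**0.02 - 1.0)

# Same relay setting, decreasing fault current (multiples of pickup).
for multiple in (10.0, 5.0, 2.0, 1.2, 0.9):
    t = trip_time_standard_inverse(multiple, 1.0)
    print(f"I = {multiple:>4.1f} x pickup -> t = {t:.2f} s")
```

With these assumed settings, a fault at ten times pickup clears in roughly 0.3 s, one at twice pickup takes about 1 s, and a fault below pickup is never cleared by this element. As fault currents drift toward the load band, operating times stretch and the grading intervals between upstream and downstream relays become harder to maintain.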

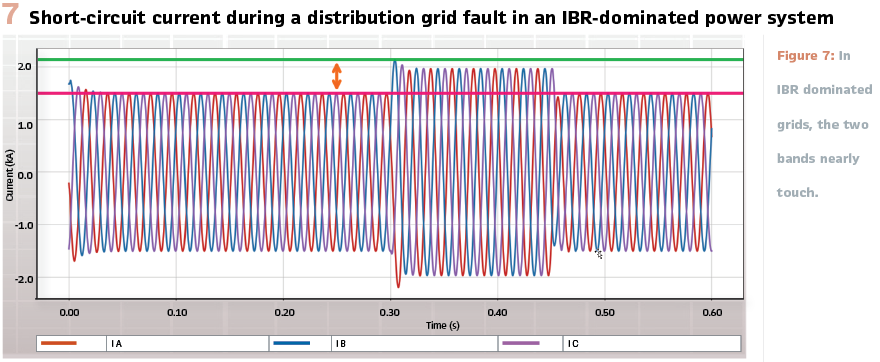

Why is this a problem? Imagine a simple situation: the MV feeder’s normal load current band sits just slightly below the prospective fault current band. In SG-dominated grids, the fault band towers several per unit above the load band. But in IBR-dominated grids, the two bands nearly touch. Take a look, for example, at Figure 7, where the current measured directly after the transformer at the distribution level is shown, and a 3-phase fault occurs on a feeder further down in the network.

This proximity dramatically increases the risk of under-reaching for pickup elements, forces compromises in relay grading (to avoid overlaps), and raises the probability of blinding, especially under certain voltage profiles or loading conditions. The message is clear: rising IBR penetration compresses protection margins, making it much harder to ensure secure, dependable operation.

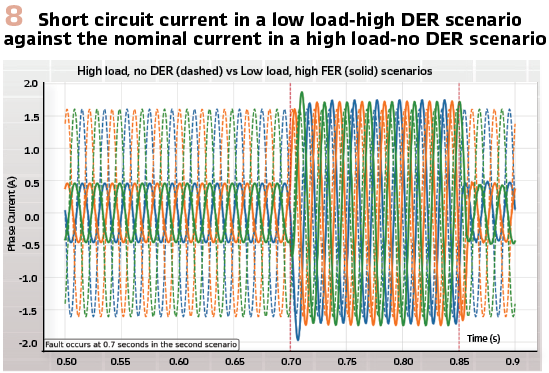

Adding DERs – whether synchronous generator‑based or inverter‑based – on MV feeders amplifies these trends and introduces further protection challenges. When local generation is high while the transmission backbone offers low short-circuit strength, the net fault current at the feeder end can be surprisingly small. This scenario erodes pickup sensitivity for overcurrent elements, complicates directionality and compresses selectivity margins across both upstream and downstream devices.

Conversely, when local generation is low and demand is high, such as during evening peaks or periods of low solar irradiance, the absolute current in the feeder may increase. Yet coordination remains fragile because HV short-circuit strength remains low. The margin between load and fault current narrows. As a result, backup elements may time out or clear too late, and transformer through-fault stress can accumulate. Figure 8 illustrates two different scenarios in the distribution grid. The dashed line represents a normal operational situation characterized by a high load and no active DER. In contrast, the solid line depicts a situation with a lower load and increased DER injection, during which a fault occurs. Notably, the nominal current in the first scenario nearly matches the short-circuit current observed in the second scenario. Taken together, these scenarios underline both the technical complexity and the urgency for utilities to harden protection against low and variable fault levels.

Looking toward the Future

How should protection evolve as the grid itself changes? This fundamental question sits beneath many conference debates and papers.

The discussion typically falls into three camps. The first path is to emulate the short-circuit currents of the past, allowing existing protection philosophies and settings to persist, which can be achieved by reshaping grid codes and hardware capabilities, as well as adding synchronous compensators.

While this offers continuity, it risks treating symptoms rather than addressing root causes. Power-electronic devices have genuine thermal limits. Requiring ‘machine-like’ fault injections can be costly, inconsistently implemented, and sometimes at odds with broader system objectives like efficiency, stability, and safety. This approach assumes the old paradigm was perfect and that protection should stay the same even as everything else changes – an increasingly difficult position to justify.

A second path is to lean into AI and machine learning for decision-making in protection. There is real promise here: pattern recognition across complex operating states, adaptive behavior in the face of variability, and the ability to fuse diverse signals. Yet, we must be realistic about the constraints. Protection decisions demand determinism, bounded latency, and explainability – operators must be able to trace why a trip was issued. Models are only as good as the dataset used to train them, and future grids may look very different from the current patterns.

Can we guarantee that the training datasets will realistically represent the phenomena that can occur in the future? Without rigorous engineering wrappers – such as formal verification, runtime guards, and conservative fallback logic – AI/ML alone is not the silver bullet, even though lately it is proposed as such across different industries.

The third path, and the stance we advocate, is to rethink protection concepts so they no longer depend on the nature of the short-circuit current source or at least minimize this effect. In practice, this means defining a source-agnostic concept – not designed solely around the idea of synchronous or inverter-based systems. This is exactly how we intend to continue this Ph.D. project – by proposing solutions to the problems we can clearly see arriving. A promising solution involves leveraging incremental (time-domain) quantities, which, in theory, are influenced more by the network characteristics than by the nature of the current source.
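One common way to obtain incremental quantities is a one-cycle delta filter, which subtracts the sample taken exactly one power cycle earlier: Δi[n] = i[n] − i[n − N]. The sketch below applies it to a synthetic current. It is a generic illustration of the idea rather than the method developed in this project, and the function name `incremental`, the sampling rate of 32 samples per cycle, and the step change in amplitude are all assumptions.

```python
import math

def incremental(samples, samples_per_cycle):
    """One-cycle delta filter: delta[n] = x[n] - x[n - N].

    The first N outputs are set to 0.0 because no one-cycle-old
    sample exists yet.
    """
    n_cyc = samples_per_cycle
    return [
        0.0 if n < n_cyc else samples[n] - samples[n - n_cyc]
        for n in range(len(samples))
    ]

N = 32  # assumed samples per power cycle

# Synthetic current: 1.0 pu amplitude for two cycles, then a "fault" raises it to 1.5 pu.
current = [
    (1.0 if n < 2 * N else 1.5) * math.sin(2 * math.pi * n / N)
    for n in range(3 * N)
]

delta = incremental(current, N)
print("max |delta| before fault:", max(abs(d) for d in delta[: 2 * N]))
print("max |delta| after fault: ", max(abs(d) for d in delta[2 * N :]))
```

In steady state the output is essentially zero, and the fault-imposed change appears only after the disturbance. Note that with a one-cycle memory the filter starts subtracting faulted samples from faulted samples after one cycle, so the useful information is confined to a short window.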

There are already papers and studies that investigate the effectiveness of the incremental quantities on the feeders connecting the IBRs to the bulk power system. However, the effectiveness of the incremental quantities in short, cable-dominated distribution grids remains an open and crucial question for this Ph.D. project.

A significant challenge is adjusting these measurements to achieve both selectivity and security in distribution protection. This requires innovative methods to extend the useful information gathered from incremental quantities beyond the typical one or two cycles. By doing so, we can increase the use of incremental quantities while maintaining selectivity margins and rules.

To successfully implement these new protection concepts, we must critically reassess and refine our PAC infrastructure to ensure it can efficiently and securely meet the demands of the future power systems. Moreover, we must engage in a thorough evaluation of our testing strategies, recognizing their effectiveness and relevance as we adapt to evolving technologies and methodologies.

In this way, we aim to present fully rounded research on protection concepts for future distribution grids, beginning with the anticipated challenges discussed in this article and progressing toward new protection philosophies, along with a vision for translating these concepts into feasible practical solutions.

Our objective with this work is not to assert exclusivity, but rather to clarify the issue and outline potential solutions. Some readers may focus on enhancing fault emulation through grid-forming capabilities or synchronous compensators. Others might experiment with AI and machine learning protection concepts while adhering to strict engineering constraints.

Many will investigate source-agnostic solutions that aim to improve sensitivity and selectivity for more variable fault levels. The destination is shared: a safe, selective, and dependable protection system for a power grid in transition. The measure of success is simple – faults cleared fast and correctly, lights kept on. Achieving this goal will require open collaboration across TSOs, DSOs, vendors, academia, and policy makers. The time to act is now – by piloting new concepts, sharing data, and refining standards, we can ensure protection keeps pace with the grid’s evolution. If we embrace change where it helps and harden fundamentals where it matters, we can reach that destination before variability becomes vulnerability.

Biographies

Milan Jankovski earned his BSc in Electrical Engineering from Ss. Cyril and Methodius University in Skopje in 2019 and his MSc (cum laude) from TU Delft in 2022. He then joined Stedin as a protection engineer, focusing on PAC configuration and testing, as well as short-circuit studies. In 2024, he began an industrial PhD with Stedin and TU Delft, researching protection concepts for future distribution grids with a heavy influence from renewables. He is an active member of CIGRE B5 Netherlands. His main interests include protection studies, renewables, power system analysis, and PAC systems.

Rene Troost works as a Grid Strategist at Stedin, where he is responsible for Substation Automation policy and provides strategic direction within the grid control domain. Beyond Stedin, he actively participates in and leads national initiatives. As Dutch CIGRE B5 representative, he represents the Netherlands internationally. René is an active member of IEC TC57 working groups 10, 17, and 19, a CIGRE Distinguished Member, and serves on the Advisory Board of PAC World and the Board of Directors of the UCA International Users Group.

Marjan Popov is a professor at Delft University of Technology. His research interests include intelligent protection, system integrity protection schemes, and transient phenomena in power systems. He received the national Hidde Nijland Prize in 2010, the IEEE PES Paper Award, and the IEEE Switchgear Committee Award in 2011.

Edward Coster works at Stedin as a network strategist, where he is responsible for long-term grid planning of High and Medium Voltage grids. He also defines the design rules for designing and planning Medium Voltage grids. He obtained an MSc degree in electrical engineering at TU Delft in 2000. In 2010, he earned his PhD degree from TU Eindhoven.